As the application of da Vinci robotic surgery in gynecological treatment continues to mature, surgical precision has significantly improved. However, it has also increased the pressure on surgeons to make critical decisions within limited timeframes. To assist physicians in more accurately identifying organs and lesions, Distinguished Professor Jing-Ming Guo from the Department of Electrical Engineering at Taiwan Tech led a research team from the Advanced Intelligent Image and Vision Technology Research Center. In collaboration with Dr. Yu-Chi Wang and her team from the Department of Obstetrics and Gynecology at Tri-Service General Hospital, they developed an “AI-Powered Gynecological Surgery: Automatic Annotation Clinical Decision Support System”. This system utilizes AI and real-time image recognition to assist surgeons during minimally invasive gynecological procedures, reducing the risk of accidental organ damage while improving surgical safety and healthcare quality. The research has also received the 22nd National Innovation Award (Academic and Research Innovation Award).

The team led by Professor Jing-Ming Guo and Dr. Yu-Chi Wang developed the “AI-Powered Gynecological Surgery: Automatic Annotation Clinical Decision Support System,” which won the 22nd National Innovation Award (Academic and Research Innovation Award). The technology has now been transferred to IMVITec Corporation for commercialization and clinical application. From left to right: Professor Meng-Yi Pai (Graduate Institute of Biomedical Engineering, Taiwan Tech), Chairman Wen-Zheng Zhang of IMVITec Corporation, Distinguished Professor Jing-Ming Guo, and Dr. Yu-Chi Wang from Tri-Service General Hospital.

Professor Jing-Ming Guo noted that although current da Vinci surgeries offer high-resolution imaging and precise, stable operation, they still lack tactile feedback, and the identification of organs and critical tissues relies heavily on the surgeon’s experience. This poses potential risks, especially for less experienced surgeons or complex procedures. Therefore, the research team collected real clinical gynecological da Vinci surgical images, which were annotated by professional physicians and used to train and optimize deep learning models.

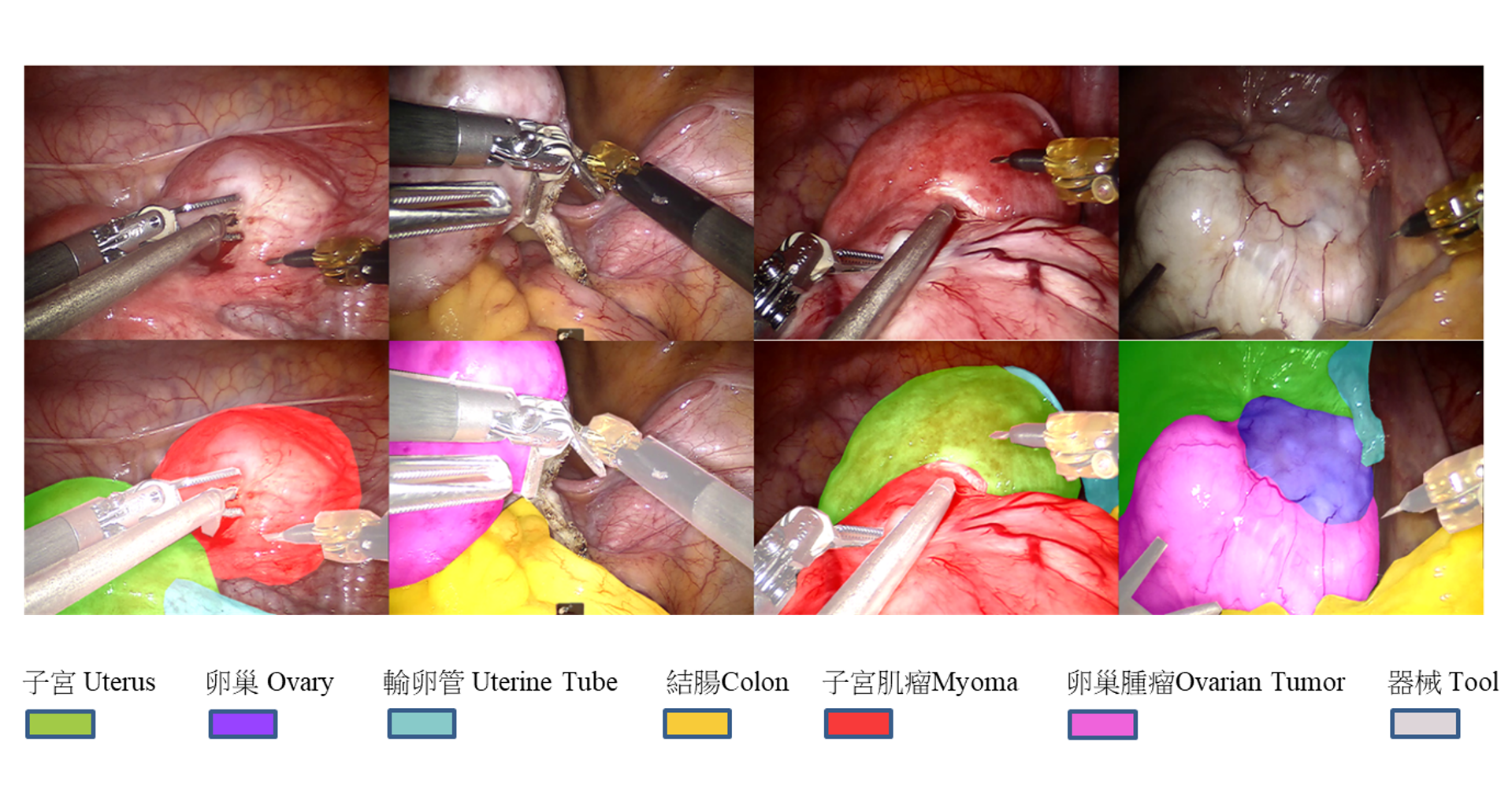

The team independently developed an “Organ Image Segmentation System (DeepVinci)” using deep semantic segmentation technology. During surgery, the system performs real-time recognition and clearly marks key tissues (such as the uterus, ovaries, fallopian tubes, colon, and tumors) with color-coded overlays. This helps surgeons identify organ and tumor boundaries during dissection and resection and reduces the risk of accidental injury. The result is a safer, more visual support mechanism for minimally invasive gynecological surgery, which in turn lowers the likelihood of medical disputes.

The team’s self-developed “Organ Image Segmentation System (DeepVinci)” performs real-time recognition during surgery and clearly marks tissues such as the uterus, ovaries, fallopian tubes, colon, and tumors with color overlays, helping surgeons identify boundaries and reduce the risk of accidental injury.

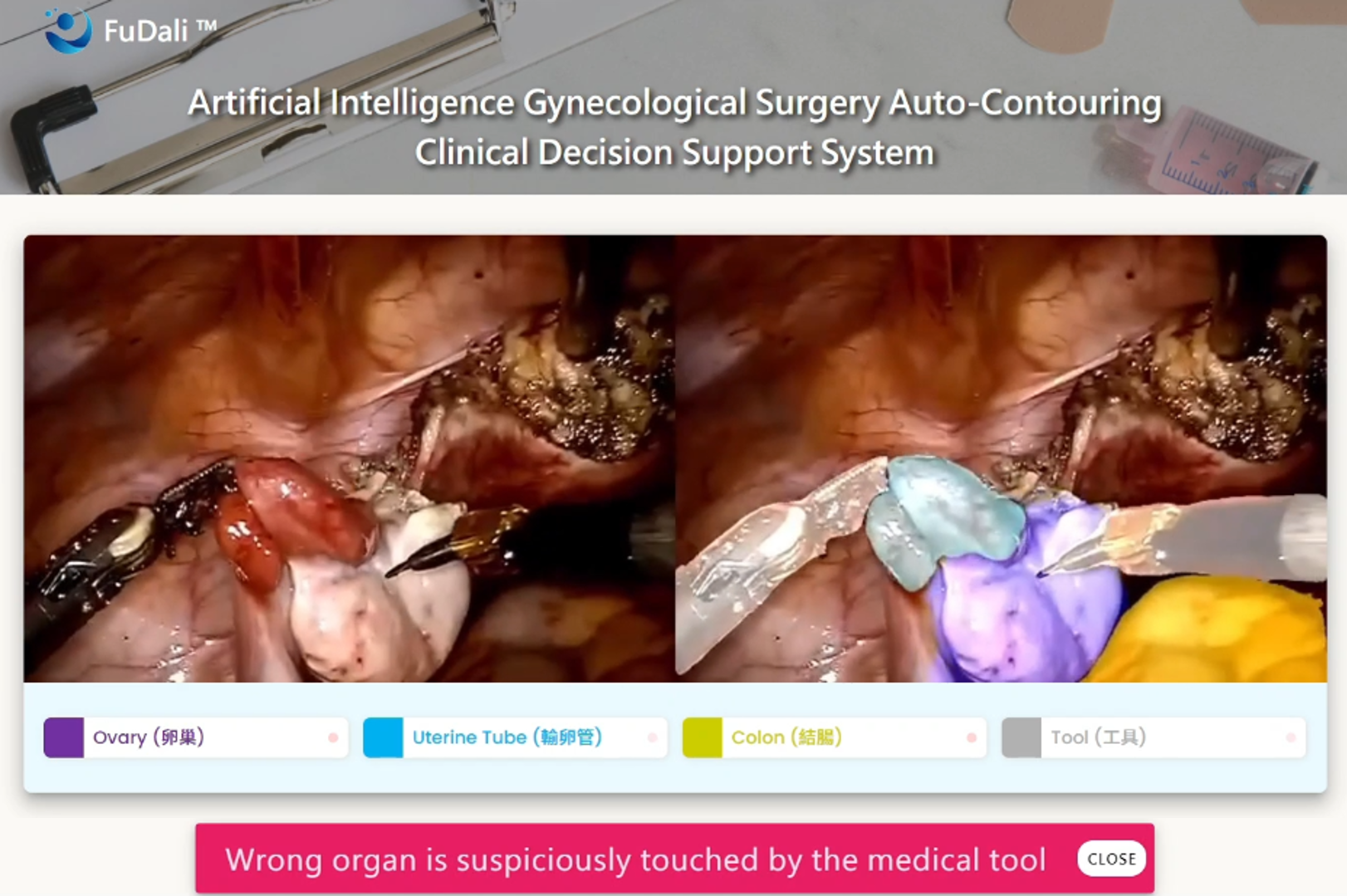
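
As a rough illustration of the color-coded overlay described above, the sketch below blends a per-pixel class mask into a video frame. It is not DeepVinci's implementation: the class ids, the colors in `PALETTE`, the `overlay_mask` function, and the `alpha` blending weight are all assumptions made for this example.

```python
import numpy as np

# Hypothetical class ids and colours (RGB); the article does not publish the real palette.
PALETTE = {
    1: (255, 0, 0),     # uterus
    2: (0, 255, 0),     # ovary
    3: (0, 0, 255),     # fallopian tube
    4: (255, 255, 0),   # colon
    5: (255, 0, 255),   # tumor
}


def overlay_mask(frame, mask, alpha=0.4):
    """Blend class colours into an RGB frame wherever the mask holds a known class id.

    frame: uint8 array of shape (H, W, 3)
    mask:  integer array of shape (H, W); 0 means background
    alpha: assumed blending weight of the overlay colour
    """
    out = frame.astype(np.float32)
    for class_id, colour in PALETTE.items():
        region = mask == class_id  # boolean (H, W) selector for this class
        out[region] = (1 - alpha) * out[region] + alpha * np.array(colour, dtype=np.float32)
    return out.round().astype(np.uint8)
```

Background pixels (class 0) are left unchanged, so the surgeon still sees the original image outside the annotated regions.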

In terms of performance, the system processes surgical video in real time at 30 frames per second and achieves an organ detection sensitivity of up to 90%. Professor Jing-Ming Guo emphasized that the goal of this technology is not to replace surgeons but to serve as a “second pair of eyes” during procedures, providing real-time visual alerts, especially around critical structures such as blood vessels and ureters.
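
To put the two figures above in context, here is a small sketch of the arithmetic behind them. It uses the standard definition of sensitivity, TP / (TP + FN); the article does not say whether the team counts pixels, organs, or frames, so the unit of counting here is an assumption, as are the function names.

```python
def sensitivity(true_positives, false_negatives):
    """Standard sensitivity (recall): the share of real positives that were detected."""
    total = true_positives + false_negatives
    if total == 0:
        raise ValueError("sensitivity is undefined when there are no positive cases")
    return true_positives / total


def frame_budget_ms(fps):
    """Time available to process one frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps
```

Under these definitions, detecting 90 of 100 true organ instances gives a sensitivity of 0.9, and running at 30 frames per second leaves roughly 33 ms to process each frame.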

Currently, the system has achieved near real-time image update speeds compatible with clinical surgical workflows. To ensure reliability across various conditions, the research team has tuned the system for different surgical scenarios and accumulated over 100 surgical cases as training and testing data. Moving forward, they will continue optimizing the model’s performance under extreme conditions, such as varying lighting, heavy bleeding, or visual obstruction, to improve both response speed and recognition stability.

The system can also be integrated into surgical platforms to provide real-time identification of key tissues, with alerts triggered when surgical instruments approach critical organs.
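
One simple way such a proximity alert could work is sketched below: measure the pixel distance from an instrument tip to the nearest pixel of a segmented organ and compare it with a threshold. The article does not describe the actual alert logic, so the function names, the tip coordinates, and the 20-pixel threshold are all hypothetical.

```python
import numpy as np


def min_distance_px(tip_xy, organ_mask):
    """Distance in pixels from the instrument tip (x, y) to the nearest organ pixel.

    Returns None when the mask contains no organ pixels.
    """
    ys, xs = np.nonzero(organ_mask)
    if xs.size == 0:
        return None
    dx = xs - tip_xy[0]
    dy = ys - tip_xy[1]
    return float(np.sqrt(dx * dx + dy * dy).min())


def should_alert(tip_xy, organ_mask, threshold_px=20.0):
    """True when the tip is within the (assumed) pixel threshold of the organ."""
    distance = min_distance_px(tip_xy, organ_mask)
    return distance is not None and distance <= threshold_px
```

A clinical system would more likely work in physical units and account for depth; this two-dimensional version only illustrates the idea of a distance-triggered alert.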

As the system’s performance continues to mature, the team has found that the core technology of the organ image segmentation system is highly adaptable. With sufficient high-quality medical imaging data, the model can be transferred and retrained for broader applications, including other endoscopic procedures, general surgery, and various minimally invasive surgeries. It can even be extended to medical imaging analysis such as MRI and CT scans.

In addition, the system can incorporate tracking and analysis of surgical instrument trajectories for post-operative workflow evaluation and quality feedback. It may also be integrated with AR and VR technologies to develop digital twin platforms, helping train younger surgeons in da Vinci procedures through simulation, thereby improving training efficiency and clinical proficiency.

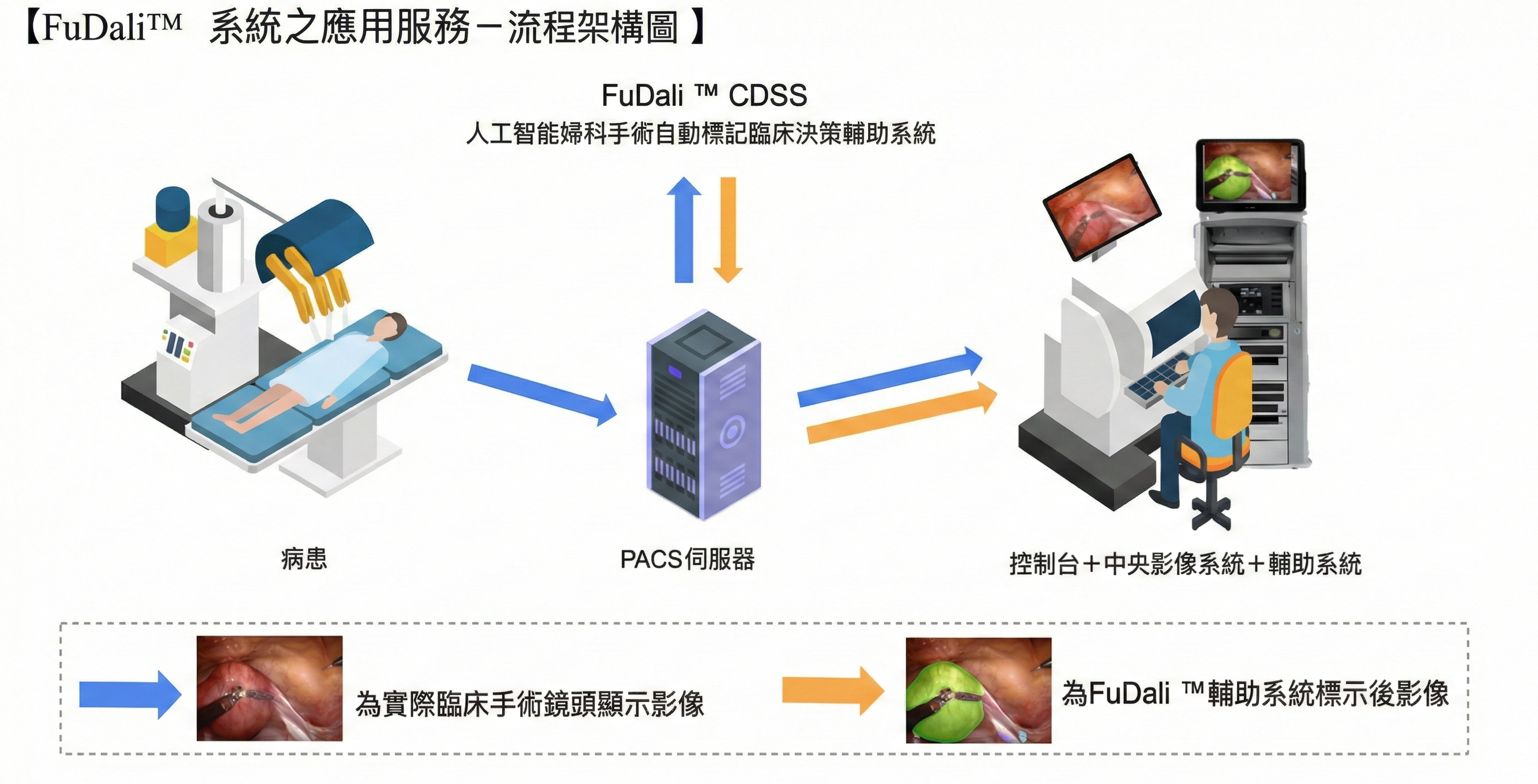

The research team plans to integrate the AI-powered gynecological surgery automatic annotation clinical decision support system into the PACS server used in da Vinci surgeries. Through dual screens or split-screen displays, real-time surgical images and AI-based organ annotations can be shown simultaneously, enhancing diagnostic efficiency and accuracy.

The team has completed technology transfer, with IMVITec Corporation responsible for product development and market promotion. Clinical validation and regulatory certification are ongoing as the system is gradually introduced into real-world medical settings. Professor Jing-Ming Guo stated that AI-assisted surgical systems will become essential tools for surgeons in the future, helping reduce experience gaps and improve the quality of clinical decision-making. In his view, this brings smart healthcare beyond research and into the operating room as a vital support for surgeons.